Skyhawk recently announced a couple of new features that are based on ChatGPT. What’s new?

Watch this video and then read the blog for details:

- A new addition to our scoring mechanisms for malicious events called ‘Threat Detector’.

We use the ChatGPT API as an “advisor” to increase confidence in our scoring mechanism. Our current scoring mechanism already combines several rules and machine-learning-based classifiers that can be thought of as advisors. Each one pushes the score in its own direction, and the ML models ultimately weigh all of them to decide on the threat level of an event. Skyhawk’s new ChatGPT functionality adds what amounts to “countless” new advisors whose opinions we consider in our final scoring mechanism. This combined advisor is proficient because the underlying model was trained on a very large body of security information from across the internet.

- A new tab in our product called ‘Security Advisor’.

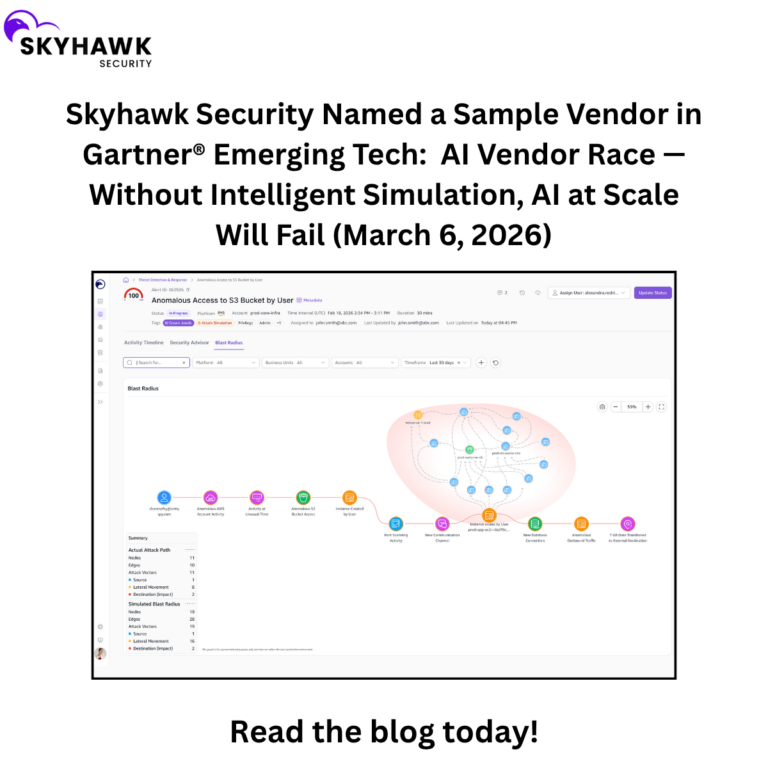

Skyhawk adds textual explanations (produced by ChatGPT) for the incidents found by the platform. These appear in a new platform tab called the ‘Security Advisor’. Having these textual explanations, in addition to visual representations, helps organizations understand incidents in greater depth and makes them more accessible to security personnel.

How does ChatGPT help with scoring an Attack Sequence?

Our product uses ML to score security events and track them on a timeline called an “Attack Sequence”. We use machine learning labeling functions to “ask questions” about each potential event, and then score those events using proprietary information that we have gathered about security events (as well as the MITRE framework and other contextual components). Each advisor scores whether an event is suspicious or not, and we then aggregate all the advisors’ results to create the Attack Sequence. Now we’re adding another very strong advisor that helps us improve both the detection rate and the speed of detection.
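To make the advisor idea more concrete, here is a minimal sketch of how several labeling functions could each score an event and be combined into one result. It is an illustration only, not Skyhawk's implementation: the `Advisor` class, the three example functions, the event fields, the weights, and the weighted average are all assumptions made for this example (the real product uses ML models to combine advisors, and the language-model advisor below is a hard-coded stand-in rather than an API call).

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Event = Dict[str, str]  # hypothetical event shape, e.g. {"action": ..., "status": ...}


@dataclass
class Advisor:
    name: str
    weight: float                      # assumed importance of this advisor
    score: Callable[[Event], float]    # returns a suspicion score in [0, 1]


def failed_api_call(event: Event) -> float:
    # Hand-written rule: a failed API call is treated as suspicious.
    return 1.0 if event.get("status") == "failure" else 0.0


def sensitive_action(event: Event) -> float:
    # Hand-written rule: some actions are considered sensitive (made-up list).
    return 1.0 if event.get("action") in {"GetObject", "ListBuckets"} else 0.0


def language_model_opinion(event: Event) -> float:
    # Stand-in for the external "black box" advisor; values are invented.
    return 0.8 if event.get("status") == "failure" else 0.2


def aggregate(event: Event, advisors: List[Advisor]) -> float:
    # Weighted average of all advisor scores (a simplification of ML-based aggregation).
    total_weight = sum(a.weight for a in advisors)
    return sum(a.weight * a.score(event) for a in advisors) / total_weight


advisors = [
    Advisor("failed_api_call", 1.0, failed_api_call),
    Advisor("sensitive_action", 1.0, sensitive_action),
    Advisor("language_model_opinion", 2.0, language_model_opinion),
]

event = {"action": "ListBuckets", "status": "failure"}
print(f"{aggregate(event, advisors):.2f}")
```

In this sketch, adding the extra advisor does not replace the hand-written rules. It simply contributes one more opinion, with its own weight, to the same aggregate score.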

How does GPT help?

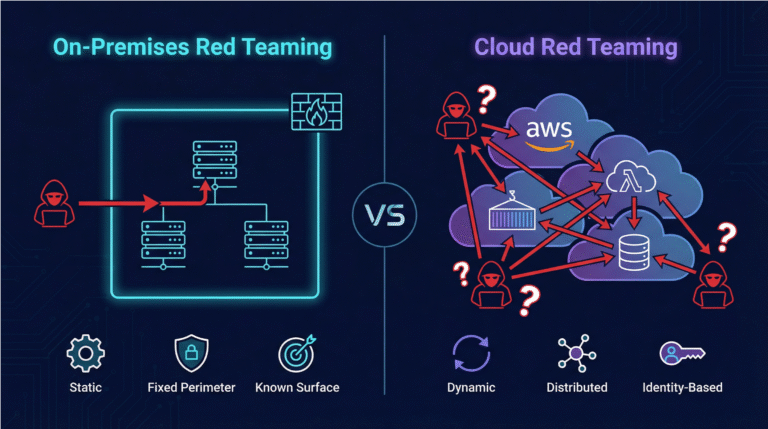

GPT is trained on reams of security data from across the web. For Skyhawk, it adds yet another point of view that we may not have thought of ourselves, a sort of unknown unknown. It allows us to assess what is considered risky and malicious based on the many reports that GPT has learned from. That gives us more confidence that we’re not missing anything. Up until now, all the labeling functions of our advisors were code that we wrote ourselves. Now we add GPT results, a black box that acts as a sort of super-advisor.

Can you give an example?

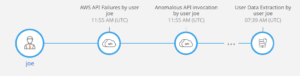

The image shows a real sequence in which Skyhawk was able to alert just before the user actually performed a data extraction. With GPT, however, the flag was raised after the very first activity of the sequence. That means we were able to prevent the data extraction by alerting much earlier than before and, we believe, much earlier than any other product on the market.

In this image, the ‘AWS API failure’ is an activity that we identified as malicious but that is not yet harmful. Most security products will either not alert on it, or will raise an alert that gets ignored because it does not look threatening. But GPT, together with our MBI for this activity, gave us the confidence to tell the customer that this is a true alert (what we call a Realert).
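The effect of an extra advisor on alert timing can be sketched with a toy model. The snippet below assumes, purely for illustration, that a sequence alerts once the running sum of per-event scores crosses a threshold. The scores, the threshold, and the `first_alert_index` helper are invented for this example and do not describe how the product actually computes alerts.

```python
from typing import List, Optional


def first_alert_index(scores: List[float], threshold: float) -> Optional[int]:
    # Return the index of the first event at which the running total
    # reaches the threshold, or None if it never does.
    running = 0.0
    for i, s in enumerate(scores):
        running += s
        if running >= threshold:
            return i
    return None


# Hypothetical per-event scores for a four-event sequence.
baseline = [0.2, 0.3, 0.3, 0.4]        # hand-written advisors only
extra_advisor = [0.9, 0.1, 0.1, 0.1]   # extra advisor reacts strongly to the first event
combined = [b + e for b, e in zip(baseline, extra_advisor)]

THRESHOLD = 1.0  # assumed alerting threshold

print(first_alert_index(baseline, THRESHOLD))   # alerts at the last event (index 3)
print(first_alert_index(combined, THRESHOLD))   # alerts at the first event (index 0)
```

With these made-up numbers, the baseline only crosses the threshold at the final event, while the combined score crosses it on the very first one, which mirrors the early flag described above.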

What is the benefit of our ChatGPT functionality for Skyhawk customers?

The benefit is better security for cloud infrastructure. Security tools need to be as accurate as possible so that more of our alerts are real and fewer are false positives. The ChatGPT API adds a layer of confidence: in 78% of the cases we tested, it flagged truly malicious activity that led to a breach earlier than we did without the ChatGPT data.

It’s as if we take the advice of thousands of security researchers and average them, using the wisdom of the crowds to gain confidence on when to alert customers. This way they can pay attention only to events that are real threats and ignore the rest.

The addition of ChatGPT scoring allowed us, in 78% of the cases, to alert earlier than we would have with our own baseline score.

Want to learn more? Please join the upcoming webinar on April 25th at noon EST by registering here.